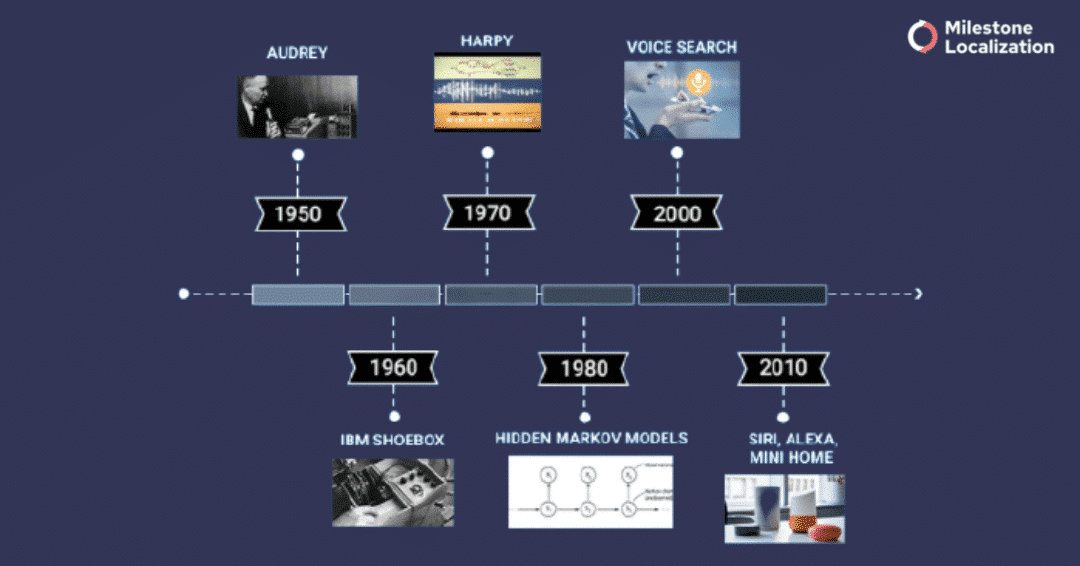

The first computer speech recognition system was invented in 1952. It was called Audrey and could understand only digits. A decade later, IBM’s Shoebox arrived with a vocabulary of 16 English words. Then in the 1970s, Harpy was invented by Carnegie Mellon and could understand over a thousand words.

Speech recognition systems have come a long way since then. Today, speech recognition has reached a new era with the help of enormous computing power and cloud services, coupled with Artificial Intelligence (AI).

The AI transcription services of today are doing what was once considered impossible. Yet, speech recognition still lacks the accuracy of the human transcriber.

And while speech recognition seems like an easy task (simply jotting down what is said in an audio recording), background noise, heavy accents, and multiple speakers can make accurate automated transcription nearly impossible.

Indeed, these are the areas where speech recognition is still lacking. To overcome them, humans have to step into the picture. In this article, we’ll discuss why we still need humans for AI speech transcription.

What is Human Transcription?

Human transcription, as the name suggests, is done by human transcriptionists. It is the act of listening to an audio file and converting it into written text.

Generally, human transcription is more accurate than speech transcription by AI since people can decipher jargon and accents better than any machine. Additionally, as compared to machines, humans also deal with background noise and multiple speakers more effectively.

All these advantages come at a price: human transcription is expensive and takes time. Even for a simple audio file, a human transcriptionist needs 3-5 hours to transcribe one hour of content.

What are the different types of transcription?

Generally, depending on its end use, there are three main types of transcription:

- Verbatim transcription: This is a word-for-word transcription of spoken language, which includes fillers like ‘ah’, ‘uh’, and ‘um’, throat clearing, as well as incomplete sentences.

- Intelligent verbatim transcription: The transcribed spoken language is filtered to make the meaning of what is being said clearer. It involves light editing to improve the overall grammar and style of the transcribed text.

- Edited transcription: The full script is edited and formalized for clarity and readability.
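To make the difference between verbatim and intelligent verbatim concrete, here is a minimal sketch that strips filler words from a verbatim line. The filler list and the function name `strip_fillers` are assumptions made for illustration; real intelligent verbatim editing also involves judgment calls on grammar and style that a simple filter like this cannot make.

```python
import re

# Hypothetical filler list, taken from the examples above; a real style
# guide would define its own.
FILLERS = ("ah", "uh", "um")

def strip_fillers(verbatim: str) -> str:
    """Remove standalone filler words from one line of verbatim text."""
    # \b keeps words such as "umbrella" intact; an optional comma or
    # period directly after the filler is removed along with it.
    pattern = r"\b(?:" + "|".join(FILLERS) + r")\b[,.]?\s*"
    cleaned = re.sub(pattern, "", verbatim, flags=re.IGNORECASE)
    # Collapse any doubled spaces left behind by the removal.
    return re.sub(r"\s{2,}", " ", cleaned).strip()

print(strip_fillers("Um, I think, uh, we should start now."))
# I think, we should start now.
```

The output still needs a human pass (for example, the leftover comma after "think"), which is exactly the light editing that intelligent verbatim transcription calls for.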

Timestamps in transcription

Some AI transcription tools feature automatic timestamps, also called time coding. A time code is a reference number to a specific time point in an audio recording. Timestamps are noted in the left margin of the document.

Time codes can save you time, money, and resources, since you can easily search the recording and find its critical parts. Timestamps are inserted at whatever intervals the client needs: a transcript can be stamped every few seconds or every five minutes.

Timestamping is commonly used for captioning or subtitling a video as well as for legal procedures, such as witness statements, hearings, tribunals, and evidence interviews.
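As a rough illustration of interval-based timestamping, the sketch below places a time code in the left margin whenever at least a chosen number of seconds has passed since the previous stamp. The input format (a list of start-time and text pairs) and the names `format_timecode` and `stamp_segments` are assumptions for this example, not the output of any particular transcription tool.

```python
def format_timecode(seconds: float) -> str:
    """Format a position in the recording as HH:MM:SS."""
    total = int(seconds)
    hours, rem = divmod(total, 3600)
    minutes, secs = divmod(rem, 60)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}"

# Blank margin as wide as a "[HH:MM:SS] " stamp, so the text stays aligned.
MARGIN = " " * len("[00:00:00] ")

def stamp_segments(segments, interval_s):
    """Prefix a segment with a time code once interval_s seconds have
    passed since the last stamp; indent the other segments."""
    lines = []
    next_stamp = 0.0
    for start, text in segments:
        if start >= next_stamp:
            lines.append(f"[{format_timecode(start)}] {text}")
            next_stamp = start + interval_s
        else:
            lines.append(MARGIN + text)
    return lines

# Assumed input: (start time in seconds, transcribed text).
demo = [
    (0, "Good morning, everyone."),
    (12, "Let's get started."),
    (31, "First item on the agenda."),
]
for line in stamp_segments(demo, interval_s=30):
    print(line)
# [00:00:00] Good morning, everyone.
#            Let's get started.
# [00:00:31] First item on the agenda.
```

Changing `interval_s` to 5 or 300 gives the "every few seconds" or "every five minutes" styles described above.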

What is Speech Transcription by AI?

AI transcription services use software that has been trained on thousands of hours of human speech recordings. Such systems allow you to upload your audio file to the application, and it gets converted into written text.

This software is a powerful tool and has thus become a popular choice among journalists, podcasters, students, and others. You can find a wide range of free AI transcription tools on the internet. For example, Transcribe is a free, open-source tool that you can use straight from your browser. FTW Transcriber and Express Scribe, on the other hand, are downloadable tools that you can install for free on either Mac or Windows.

Generally, the data being transcribed for AI can be in two forms:

- human-to-machine speech (e.g., voice commands)

- human-to-human speech (e.g., interviews, phone conversations).

Advantages & Disadvantages of AI Transcription

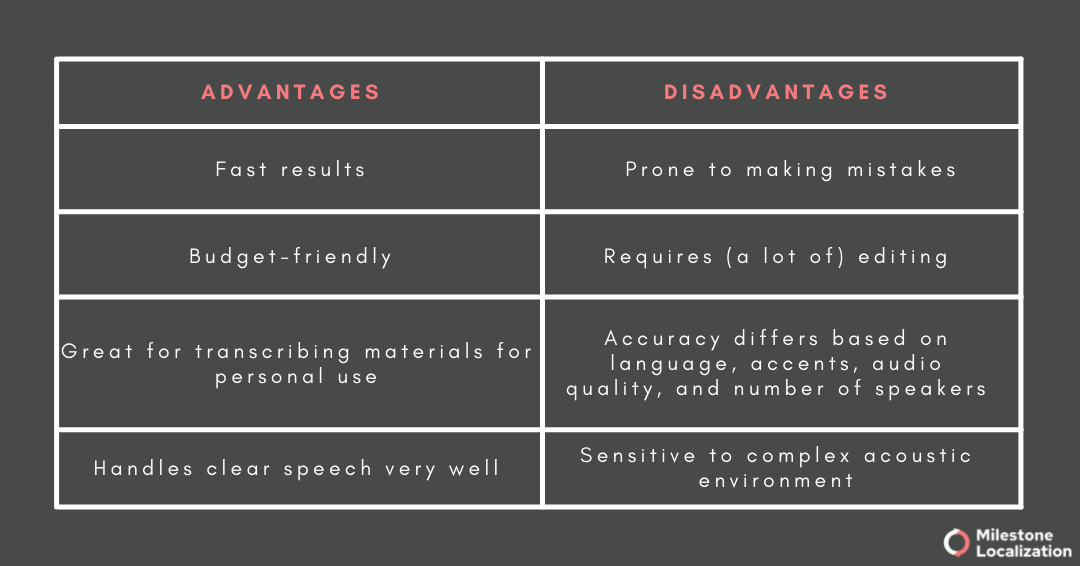

AI transcription services have their advantages and disadvantages. Knowing them will help you make the best decision for your needs.

When to use AI Transcription services?

This is a very important question, since AI speech transcription does not work equally well for every type of audio recording.

Generally, it is best to opt for AI transcription services if you:

- need a transcription immediately or in a relatively short period

- have a limited budget

- have a recording with clear audio and only 1-2 speakers

- have a recording in only one language, i.e., no language switching

- only need a rough draft of the recording

- have time to edit the transcription yourself

- want to search the audio recording for specific keywords or phrases

- are looking for specific quotes in the recording

Overall, AI speech transcription is most suitable for audio recordings with just a few speakers, limited overlapping speech, and no background noise.

Do AI transcription tools work for all languages?

Unfortunately, AI transcription tools work best with widely spoken languages, such as English, Spanish, German, Hindi, and Russian.

While it is still possible to transcribe an audio file in less widely spoken languages, such as Bulgarian, Danish, and Czech, the quality of the output will likely be very poor.

Why is that?

As already mentioned, AI transcription software must be trained on thousands of hours of audio recordings for it to work well.

When there is not enough data for specific languages (e.g., Bulgarian), the transcription engine cannot be trained efficiently, and thus, it is prone to produce low-quality transcriptions.

What’s more, AI speech-to-text tools cannot handle more than one language at a time. This means that if your audio recording contains language switching, the system won’t be able to transcribe it properly.

So, if you want to transcribe an audio recording in a less widely spoken language, you are unlikely to get a good output with an AI transcription tool.

AI Speech-to-Text vs. Human transcription – Which one to choose?

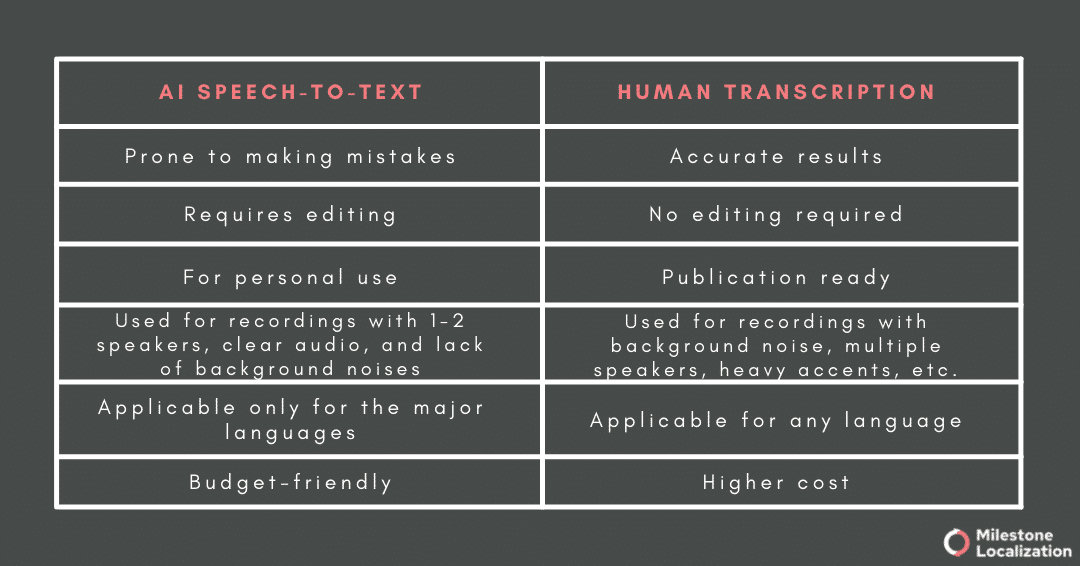

AI Speech-to-Text or Human transcription? If you don’t know which one to select, it is essential to understand that the two services serve different needs.

Have a look at the table:

As you can see, both these services have certain advantages and disadvantages. Thus, it all boils down to your priorities and desired output.

Most clients that choose an AI transcription service need the script in a short period and for their personal use. This means that any errors in the transcription are not a problem as long as they do not hinder comprehension.

Still, if you have the time to edit the output yourself, you can always save some money by opting for AI transcription.

On the other hand, clients invest in human transcription services because their audio recording is unsuitable for AI transcription.

This means that it might contain too much background noise, or it might include several speakers. What’s more, if you want to publish transcribed materials, it is always best to use human transcription.

Can AI and Human Transcription Work Together?

Let’s face it – automatic speech recognition is not perfect. However, it is quick and cost-effective. Human transcribers and AI can work together to create the fastest and most efficient workflow.

How could that be accomplished, though?

It’s pretty simple. First, the audio recording is transcribed with AI transcription software. Next, a human transcriptionist goes through the text and corrects it. They might add omitted words or phrases, fix errors, and edit the overall layout of the text.

The result is a high-quality transcription produced in a much shorter period and at a lower cost than fully manual work. Clearly, you don’t need to sacrifice quality for time and cost.

You can get the complete package by opting for the most efficient workflow of machine transcription + human editing.
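One way to picture the hand-off between machine and editor is sketched below: the machine draft is passed along with its least certain words marked, so the human editor knows where to look first. This assumes the AI tool reports a confidence score per word, which not every tool does; the `Word` class, the `mark_for_review` function, the `[[...]]` marker, and the 0.8 threshold are all hypothetical choices for illustration.

```python
from dataclasses import dataclass

@dataclass
class Word:
    text: str
    confidence: float  # assumed 0.0-1.0 score reported by the AI tool

def mark_for_review(words, threshold=0.8):
    """Return the draft text with low-confidence words wrapped in [[...]]
    so the human editor can check them against the audio first."""
    return " ".join(
        w.text if w.confidence >= threshold else f"[[{w.text}]]"
        for w in words
    )

# Hypothetical machine output for a short medical dictation.
draft = [
    Word("the", 0.99),
    Word("patient", 0.95),
    Word("needs", 0.90),
    Word("metformin", 0.42),
]
print(mark_for_review(draft))
# the patient needs [[metformin]]
```

The editor still reads the whole draft, since a machine can be confidently wrong, but the markers help prioritize the correction pass.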

Common AI Speech Transcription Challenges and Solutions

AI has a bright future ahead of it, and a lot of effort is being put into developing it. Here is an outline of the biggest challenges for automatic speech recognition (ASR) and their solutions. As you will see, most of the solutions require a human transcriptionist.

- Conversations with multiple speakers: ASR fails to produce quality output when the audio recording contains multiple speakers. To overcome this problem, human transcribers are often asked to label context cues or underlying sentiments being expressed to improve the ASR system’s output quality.

- Linguistic change: Languages never stop evolving, and new words keep developing. Since ASR cannot recognize unknown words, human linguists need to help the system.

- Code or language switching: This term denotes the alternation between two languages or dialects within the same conversation. ASR cannot deal with more than one language at a time. To overcome this issue, one could assign a bilingual transcriptionist, or several transcriptionists, to deal with the different languages in the recording.

- Complex subject matters: In some cases, 100% accuracy is a must. For instance, if you are working on developing a medical device or medical software and you need to transcribe speech data, even the slightest mistake can be fatal. Thus, working with human transcriptionists with medical expertise to review the ASR transcription would be the most efficient option.

- Complex acoustic environment: Depending on your needs, you might want to have all background noises noted down in brackets, or you might want them ignored. In either case, you will need a human transcriptionist, because AI speech transcription can neither name background noises nor reliably ignore them. In fact, when the acoustic environment is too loud, it might even hinder ASR. Human transcribers, on the other hand, handle complex acoustic environments with a minimum of 99% accuracy.

- Transcription style: This term includes anything from abbreviations to punctuation. Sometimes abbreviations should be spelled out because otherwise, they might obscure the meaning. For this purpose, you will need human transcriptionists who know or can research what a specific abbreviation stands for. The same goes for punctuation.

For instance, there are no set rules for using exclamation marks. They might be used for the sole purpose of capturing an emotion or for emphasis. A machine cannot tell the difference, so human transcriptionists have to take over.

How humans improve the technology behind voice AI

- Improvement of Automated Speech Recognition (ASR) accuracy for human-to-human conversations: Recent research suggested that the word error rate (WER) for ASR used to transcribe business phone conversations ranged from 13-23%, which is a pretty high percentage. The researchers suggested that ASR handles interactions between a machine and a human quite well since people tend to speak more clearly and slowly. However, in person-to-person phone calls, this is not the case. As a result, ASR developers often use human transcribers to improve ASR accuracy for human-to-human conversations.

- Development of more inclusive technology: In their initial phase, voice assistants such as Siri and Alexa supported only clear commands in American English. To expand their customer base, the developers added new accents and dialects. Nevertheless, voice assistants continue to receive a lot of criticism for their gender and race biases.

To overcome these prejudices and develop a more inclusive technology, we need speech data from people with various demographic backgrounds. To utilize this data, however, we need human transcriptionists who can capture the most subtle differences between accents and dialects. It is a task that AI still finds difficult, if not impossible, to fulfill.

- Overcoming complex environments: ASR finds it hard to handle complex acoustic conditions, such as background noise, overlapping speech, and poor audio quality. As already mentioned, ASR functions pretty well in quiet environments, such as an office or a bedroom. But what about cars, busy workplaces, or the street? To overcome these challenges, we need human transcribers, who deal with complex acoustic environments significantly better than machines.
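The word error rate mentioned above is commonly defined as WER = (S + D + I) / N, where S, D, and I are the numbers of substituted, deleted, and inserted words in the machine output and N is the number of words in the human reference transcript. The sketch below computes it with a standard word-level edit distance. The function name and the sample sentences are made up for illustration, and the lowercasing step is an assumption; evaluation setups differ in how they normalize text.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / words in reference."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the reference words seen
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion (reference word missing)
                curr[j - 1] + 1,     # insertion (extra hypothesis word)
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)

human = "can you send the signed contract to me by friday"
machine = "can you send the sign contract to me friday"
print(word_error_rate(human, machine))
# 0.2
```

Here one word is substituted ("signed" became "sign") and one is dropped ("by"), so two errors over ten reference words gives a WER of 20%, within the 13-23% range cited above.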

In conclusion

AI speech and transcription technology is evolving fast, with big tech companies spending billions of dollars on speech recognition systems. In the years to come, as more training data becomes available, we will make big strides in automated speech recognition and transcription.

For now, speech-to-text technologies and human transcribers make the perfect team, combining accuracy and speed to produce quality transcriptions. In other words, post-editing can be approached as the solution to AI’s imperfections.

Need help with your transcription project? Contact us for a free consultation.